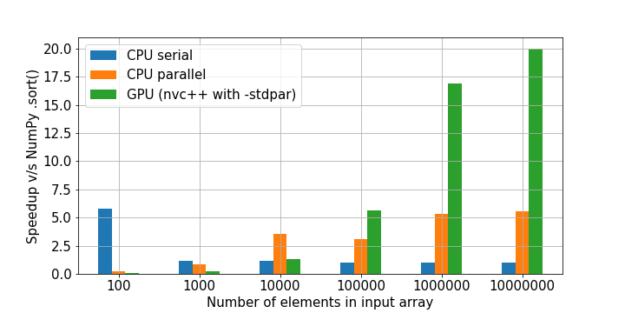

Numpy on GPU/TPU. Make your Numpy code to run 50x faster. | by Sambasivarao. K | Analytics Vidhya | Medium

Kompute v0.8.0 Released: Numpy Optimized General Purpose GPU Accelerated Compute for Cross Vendor Graphic Cards (AMD, NVIDIA, Qualcomm & Friends). Adding Convolutional Neural Network (CNN) Implementations, Edge-Device Support, Variable Types Extension ...

Backpropagation fails after moving tensor from GPU to CPU (numpy version) - autograd - PyTorch Forums