Reduce ML inference costs on Amazon SageMaker for PyTorch models using Amazon Elastic Inference | AWS Machine Learning Blog

![[P] SpeedTorch. 4x faster pinned CPU -> GPU data transfer than Pytorch pinned CPU tensors, and 110x faster GPU -> CPU transfer. Augment parameter size by hosting on CPU. Use non sparse](https://external-preview.redd.it/HXaD9AXcJOYhOEi1lQmyu3EPPVIozvFqLonNGQiL5vU.png?width=640&crop=smart&auto=webp&s=28f75dce306c09a64d07705f4ec726f486e45120)

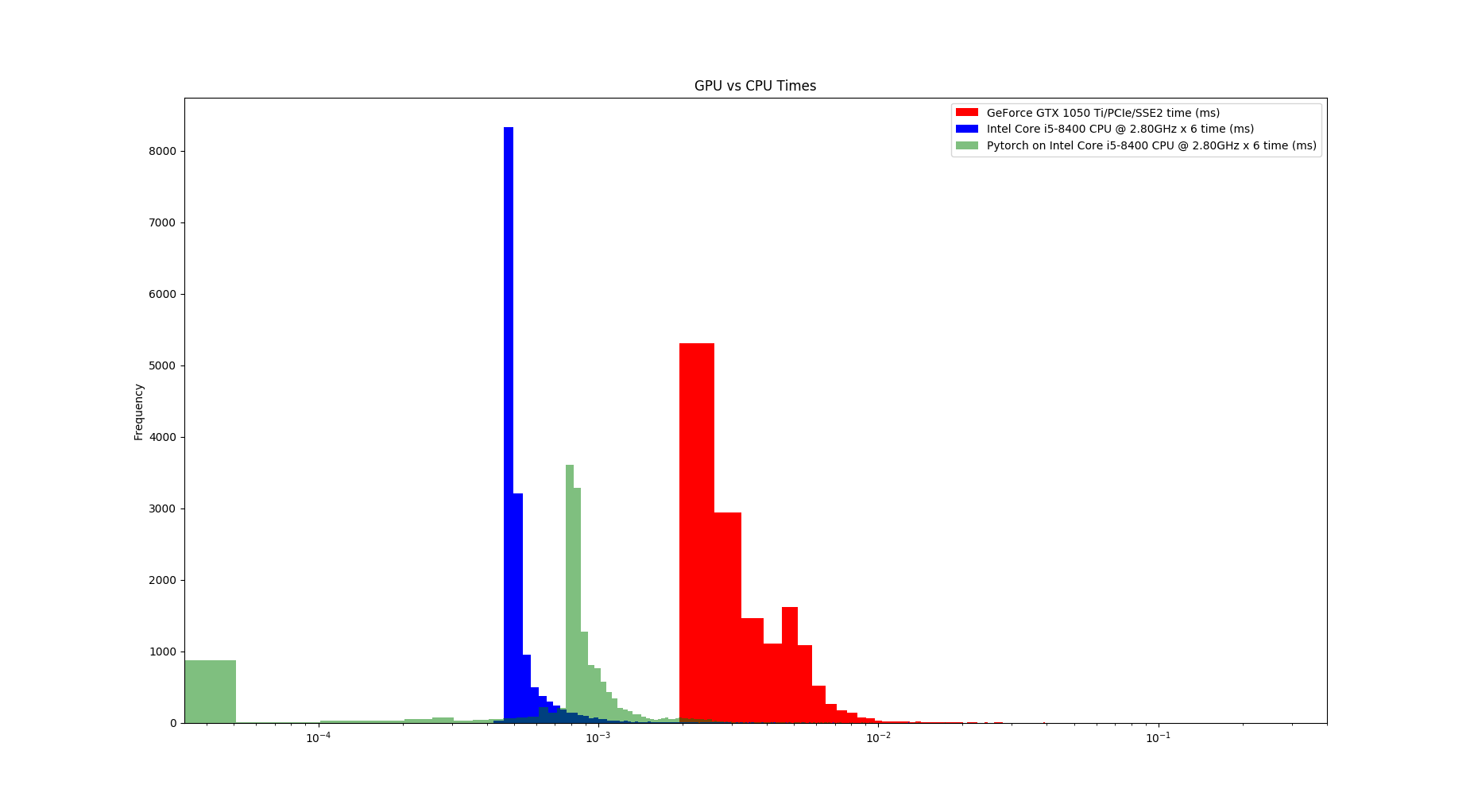

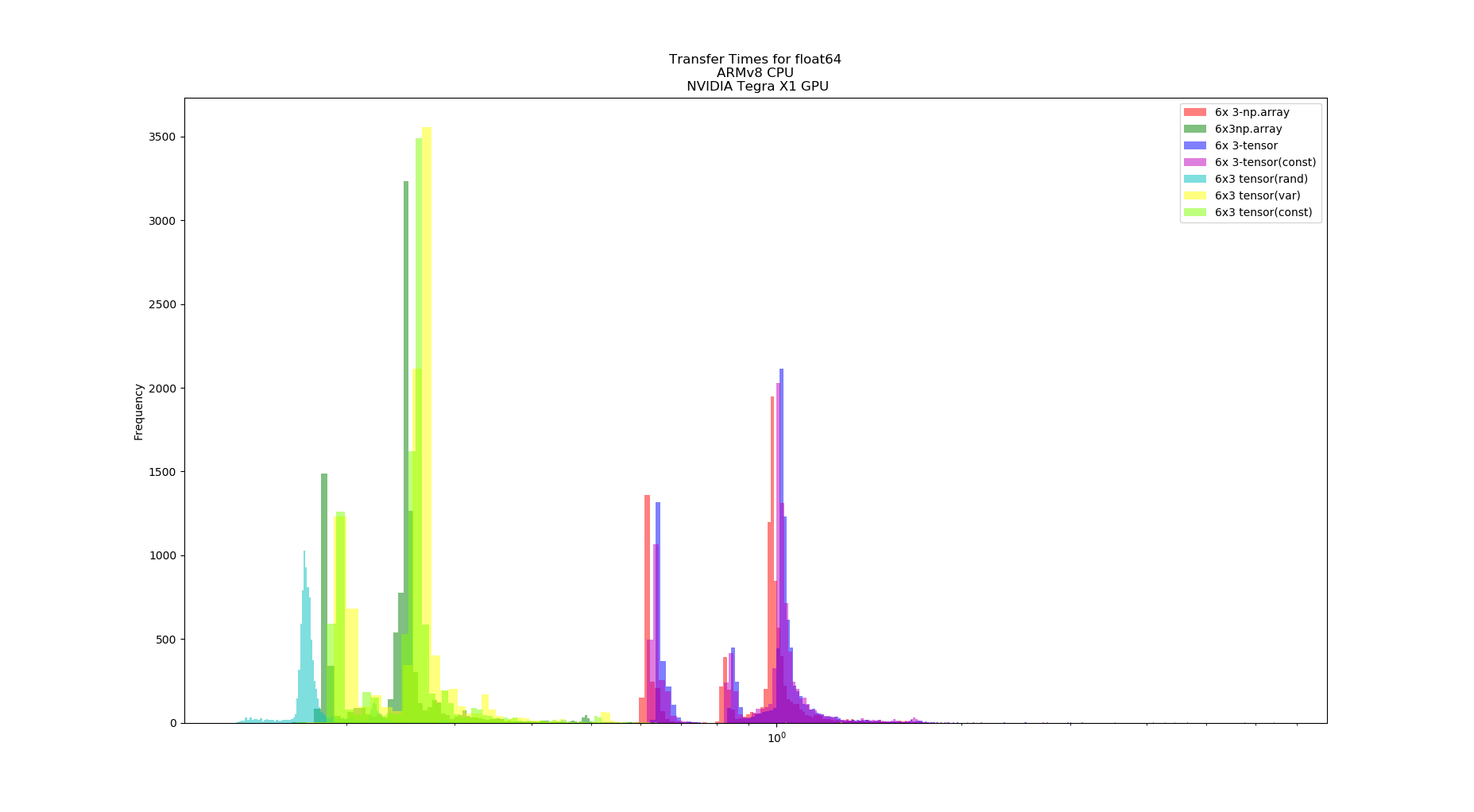

[P] SpeedTorch. 4x faster pinned CPU -> GPU data transfer than Pytorch pinned CPU tensors, and 110x faster GPU -> CPU transfer. Augment parameter size by hosting on CPU. Use non sparse

Introducing PyTorch Profiler – The New And Improved Performance Debugging Profiler For PyTorch - MarkTechPost

PyTorch: Switching to the GPU. How and Why to train models on the GPU… | by Dario Radečić | Towards Data Science

Improved performance for torch.multinomial with small batches · Issue #13018 · pytorch/pytorch · GitHub

PyTorch-Direct: Introducing Deep Learning Framework with GPU-Centric Data Access for Faster Large GNN Training | NVIDIA On-Demand

![[D] How to avoid CPU bottlenecking in PyTorch - training slowed by augmentations and data loading? : r/MachineLearning](https://external-preview.redd.it/1SY1rAjr3waT6K1wDaenkeNjjmgGGmhl8p1HSxrsCtY.jpg?auto=webp&s=1bab9cd9ae9d347b93ada957732654a339db0580)